Launched on February 10, 2026, Seedance 2.0 has already sent ripples across the creative industry, with its cinematic output quickly going viral on X (formerly Twitter). Yet, this groundbreaking generative AI video model, developed by ByteDance, has also ignited a fierce debate around copyright, prompting cease-and-desist letters from major studios and raising critical questions for creative decision-makers. For studio owners, marketers, and creative directors navigating the rapidly evolving landscape of AI video production, understanding Seedance 2.0 is no longer optional—it's essential for strategic planning.

What is Seedance 2.0? The Next-Gen AI Video Platform

Seedance 2.0, developed by the innovative minds at ByteDance, the parent company behind TikTok, officially debuted on February 10, 2026. This significant launch began as a limited beta on the Jimeng AI platform, quickly moving to a stable release within the same month. While primarily available in China, its influence is expanding, with integrations already seen in AI platforms like Dreamina, a robust software suite akin to CapCut. The model is also anticipated to be "coming soon" to global creative hubs like Artlist and fal.ai, signaling its intent for broader adoption.

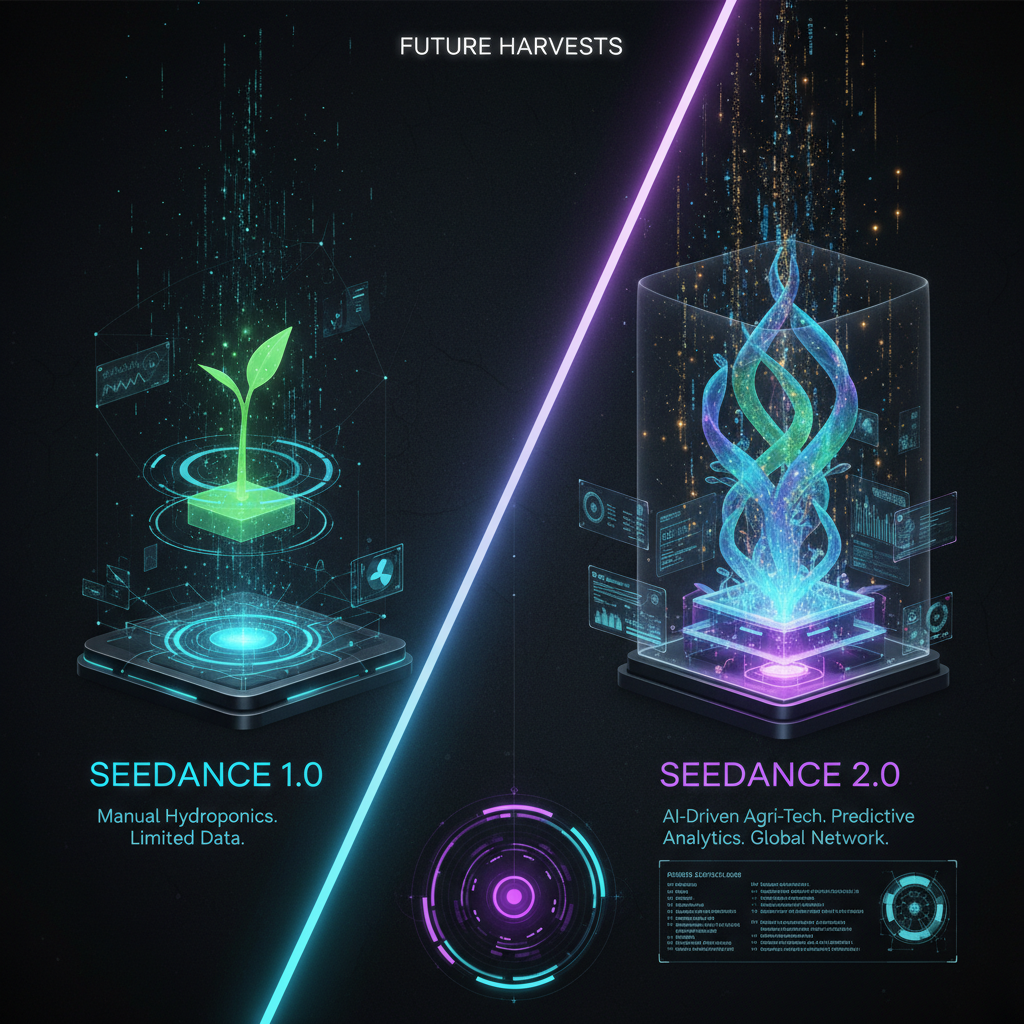

At its core, Seedance 2.0 is a sophisticated generative AI video model designed to produce short, cinematic clips. These videos typically range from 4 to 15 seconds, with the inherent capability to extend into longer, multi-shot narratives exceeding 15 seconds. What truly sets this platform apart is its revolutionary approach to creative control. Seedance 2.0 represents a profound shift from the rudimentary "prompting" seen in earlier AI video tools to offering comprehensive "director-level controls." This empowers users with an unprecedented command over critical cinematic elements such as motion, lighting, framing, and, crucially, character consistency within the generated content, transforming the user experience from simply describing to truly directing.

Unpacking Seedance 2.0's Groundbreaking Features & Capabilities

The feature set of this platform is meticulously crafted to empower professional creators and studios, pushing the boundaries of what is possible with AI video. Its capabilities are designed to address many of the limitations prevalent in previous generative AI models, making it a compelling tool for high-stakes production environments.

The most celebrated innovation is its Director-Level Controls. This functionality moves beyond simple text prompts, giving users extensive command over nuanced aspects like camera movement, light manipulation, shot composition, and maintaining visual integrity for characters and objects. This level of precision allows creative teams to imbue their AI-generated content with intentional artistic direction, previously only achievable with manual post-production.

Furthermore, the platform excels with its Multimodal Input & Long-Form Potential. It supports a highly flexible input system, allowing users to combine up to 12 distinct files across four modalities: text prompts, reference images, existing video clips, and audio inputs. This rich data fusion enables the generation of highly specific and contextually relevant short cinematic clips, typically 4-15 seconds in length. The model’s underlying architecture also holds significant potential for crafting longer, multi-shot narratives that extend beyond 15 seconds, a crucial development for more complex storytelling.
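To make the input limits above concrete, here is a minimal sketch of how a studio pipeline might sanity-check a request before submitting it. This is not ByteDance's API: the `Reference` and `GenerationRequest` classes and their field names are hypothetical. The sketch also assumes that the text prompt is supplied separately and that the 12-file cap counts image, video, and audio references; the article does not specify this.

```python
from dataclasses import dataclass, field
from typing import List

# Limits quoted in the article. Everything else here is a hypothetical illustration.
MAX_FILES = 12
FILE_MODALITIES = ("image", "video", "audio")  # assumption: text is the prompt, not a file
MIN_SECONDS, MAX_SECONDS = 4, 15               # typical single-clip range


@dataclass
class Reference:
    path: str
    modality: str


@dataclass
class GenerationRequest:
    prompt: str
    duration_seconds: int
    references: List[Reference] = field(default_factory=list)

    def validate(self) -> List[str]:
        """Return a list of problems; an empty list means the request looks valid."""
        problems = []
        if not self.prompt.strip():
            problems.append("prompt is empty")
        if not MIN_SECONDS <= self.duration_seconds <= MAX_SECONDS:
            problems.append(
                f"duration {self.duration_seconds}s is outside {MIN_SECONDS}-{MAX_SECONDS}s"
            )
        if len(self.references) > MAX_FILES:
            problems.append(
                f"{len(self.references)} reference files exceeds the limit of {MAX_FILES}"
            )
        for ref in self.references:
            if ref.modality not in FILE_MODALITIES:
                problems.append(f"{ref.path}: unknown modality {ref.modality!r}")
        return problems


request = GenerationRequest(
    prompt="A slow dolly shot through a rain-soaked neon street",
    duration_seconds=10,
    references=[Reference("hero.png", "image"), Reference("theme.mp3", "audio")],
)
print(request.validate())  # prints []
```

Checking locally before submission is a design choice for credit-based platforms, where a rejected or malformed request may still cost time or credits.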

A critical differentiator is the platform's Native Audio-Visual Generation. Unlike many competitors, including earlier iterations of Sora or Runway, which often generate video and then attempt to dub audio, Seedance 2.0 employs a proprietary "Dual-Branch Diffusion Transformer" architecture. This sophisticated system processes spatiotemporal tokens (for video) and waveform tokens (for audio) in parallel, concurrently generating synchronized native audio and sound effects, including basic lip-sync. This integrated approach significantly mitigates the common synchronization issues and unnatural soundscapes that plague models relying on post-video audio dubbing.

For professional consistency, the model offers Multi-Shot Consistency & Physics-Based Realism. Built upon a new diffusion transformer architecture, Seedance 2.0 is engineered to respect physical properties such as gravity, friction, and momentum, which makes motion more believable. It is also designed to maintain character appearance, scene elements, and overall visual style across multiple cuts.

Finally, this model boasts impressive High-Resolution Output & Efficiency. It delivers high-definition video at 1080p and supports resolutions up to 2K. Beyond raw quality, ByteDance claims an 80% reduction in wasted compute and a 90% usable output rate. Against an estimated industry average of approximately 20% usable output, that would mean higher production reliability and less iteration time for creative studios.

Seedance 2.0 Pricing: Navigating the Cost of Next-Gen AI Video Generation

Understanding the cost model for this cutting-edge platform requires a nuanced perspective, as ByteDance's initial rollout presents somewhat ambiguous pricing information. While some early reports and promotional materials describe the platform as "free to use" or a "free Seedance 2.0 AI video generator," this likely pertains to basic access, trial periods, or specific platform integrations. For serious professional use and high-volume workflows, a more structured cost model appears to be in place.

Industry reports suggest a cost of approximately "$0.50 per generation." This is a competitive rate compared with other leading AI video generators, which often fall in the "$1.00–$2.50" per generation range. ByteDance also claims an 80% reduction in wasted compute, which, if it holds in practice, would translate into further cost savings for studios producing extensive content. Taken together, these figures position Seedance 2.0 as a potentially cost-effective option for creative agencies looking to scale their AI video output without incurring prohibitive expenses.
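The per-generation price only tells part of the story, because unusable generations still cost money. As a rough sketch, the effective cost per usable clip can be estimated by dividing the price by the usable output rate. The figures below are the claimed or estimated numbers quoted in this article (90% versus roughly 20% usable output), not measured values, and the function name is purely illustrative.

```python
def cost_per_usable_clip(price_per_generation: float, usable_rate: float) -> float:
    """Effective cost of one usable clip, assuming every attempt is billed."""
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be in the range (0, 1]")
    return price_per_generation / usable_rate


# Claimed / estimated figures from the article, used as assumptions.
scenarios = {
    "Seedance 2.0 ($0.50, 90% usable)": (0.50, 0.90),
    "Competitor low end ($1.00, 20% usable)": (1.00, 0.20),
    "Competitor high end ($2.50, 20% usable)": (2.50, 0.20),
}

for label, (price, rate) in scenarios.items():
    print(f"{label}: ${cost_per_usable_clip(price, rate):.2f} per usable clip")
# Seedance 2.0 ($0.50, 90% usable): $0.56 per usable clip
# Competitor low end ($1.00, 20% usable): $5.00 per usable clip
# Competitor high end ($2.50, 20% usable): $12.50 per usable clip
```

Under these assumptions the gap per usable clip is considerably wider than the headline per-generation prices suggest, though the result is only as reliable as the claimed rates.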

Currently, this model is integrated into various AI platforms, notably Dreamina, and is announced as "coming soon" to other platforms such as Artlist and fal.ai. This distribution strategy suggests that the precise pricing structure might vary depending on the partner platform, potentially offering different tiers or credit-based systems. Decision-makers should closely monitor these integrations for definitive pricing details as Seedance 2.0 expands its global footprint.

To provide a clearer picture, here’s a comparison of current AI video tool pricing as of March 4, 2026:

| Tool Name | Pricing Model & Details (2026) | Notes |
|---|---|---|
| Seedance 2.0 | Estimated ~$0.50 per generation (for high-volume usage). Described as "free to use" for basic access or through certain platform integrations. | Cost-efficient; exact tiered pricing subject to platform integration. |
| Runway | Free: Limited generations, 125 seconds video, 25 image generations. Standard: $15/month (billed annually) for 625 seconds. Pro: $35/month (billed annually) for 1250 seconds. Unlimited: $95/month (billed annually) for 2500+ seconds (billed in 500-second increments). | Well-established tiered credit-based system. |
| Pika | Free tier with limited generations. Paid plans typically start around $8-$10/month for increased generations and features. | Tiered pricing, specific 2026 details for paid plans not definitively available. |
| Luma AI (Genie) | Pricing details not yet widely available as of February 2026 launch; currently in early access. | New offering, public pricing not announced. |
| Sora (OpenAI) | Not yet publicly available for direct purchase or widespread access as of March 2026; remains in limited research preview. No public pricing announced. | High anticipation, but no commercial access or pricing. |
| Kling (Kuaishou) | No publicly announced pricing or direct access information found as of March 2026. | Limited public information. |
| Veo (Google) | Currently in private preview as of March 2026. No public pricing information available. | Google's offering, private preview. |
| Minimax Hailuo | No specific AI video generation tool pricing found under "Minimax." General AI services may exist but are not directly comparable to video generation tools mentioned here. | Focus primarily on general AI services, not dedicated video generation pricing. |

Seedance 2.0 vs. The Competition: A Head-to-Head Look at AI Video Models

When evaluating generative AI video platforms, this model stands out with several key differentiators that position it as a formidable contender against established players and highly anticipated newcomers. Its architectural foundations and user-centric controls offer distinct advantages that address common pain points in AI video production.

A primary competitive edge lies in its Architectural Advantage (Native A/V Generation). Unlike many other tools, including even "earlier iterations of Sora or Runway," which frequently generate video frames first and then dub audio in a separate, often asynchronous process, Seedance 2.0 employs a "Dual-Branch Diffusion Transformer." This innovative architecture is designed to process spatiotemporal tokens (for visual elements) and waveform tokens (for audio) in parallel. The result is the simultaneous generation of fully synchronized audio and video, complete with basic lip-sync capabilities. This eliminates the prevalent sync issues and unnatural sound design often found in models that rely on post-generation audio integration.

In terms of creative command, Seedance 2.0 asserts Structure Control Leadership. ByteDance claims that Seedance 2.0 "outperforms Sora 2 and Veo 3" in structural control, offering what it describes as "industry-leading structure control." This implies a level of granular direction over camera angles, character positioning, and scene progression that surpasses typical text-to-video tools, effectively moving generative video production beyond the unpredictable "slot machine" era.

Another crucial metric for studios is the Usable Output Rate. ByteDance reports an impressive 90% usable output rate for Seedance 2.0. This figure represents a monumental leap compared to the estimated "industry average of ~20%" for other AI video generators. A higher usable output rate directly translates to fewer wasted compute cycles, less time spent regenerating clips, and significantly improved efficiency for professional workflows, boosting ROI for studios.
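To illustrate what these rates could mean for iteration time, the sketch below estimates how many attempts a team should expect before getting a usable clip. It assumes each generation is an independent trial with a fixed success probability, which is a simplification, and it uses the 90% and ~20% figures quoted above as given. The function names are illustrative only.

```python
import math


def expected_attempts(usable_rate: float) -> float:
    """Average number of generations until the first usable clip (geometric model)."""
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be in the range (0, 1]")
    return 1.0 / usable_rate


def attempts_for_confidence(usable_rate: float, confidence: float = 0.95) -> int:
    """Smallest number of attempts giving at least `confidence` chance of one usable clip."""
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be in the range (0, 1]")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be in the range (0, 1)")
    if usable_rate == 1:
        return 1
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - usable_rate))


for label, rate in [("Seedance 2.0 (claimed 90%)", 0.90), ("Industry estimate (~20%)", 0.20)]:
    print(
        f"{label}: {expected_attempts(rate):.2f} attempts on average, "
        f"{attempts_for_confidence(rate)} attempts for 95% confidence"
    )
# Seedance 2.0 (claimed 90%): 1.11 attempts on average, 2 attempts for 95% confidence
# Industry estimate (~20%): 5.00 attempts on average, 14 attempts for 95% confidence
```

The 95% figure is useful for scheduling: it gives a budget of attempts that will almost always be enough, not just the average.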

The model also prioritizes Creative Control & Multimodal Reference. Its "director-level controls" are complemented by a powerful "multimodal reference system" that leverages @ tags for incorporating images, video clips, and audio into prompts. This system provides more precise creative direction than traditional text-only inputs, allowing creators to define the exact look, feel, and sound of their desired output with unprecedented accuracy.
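As a rough illustration of how @ tags tie a prompt to its reference files, the sketch below scans a prompt for tags and reports any that have no matching upload. The tag syntax (`@image1`, `@video1`, `@audio1`), the regular expression, and the function name are assumptions made for this example; the article does not document the exact format Seedance 2.0 accepts.

```python
import re
from typing import Dict, List

# Hypothetical tag format: "@" followed by a letter, then letters, digits, or underscores.
TAG_PATTERN = re.compile(r"@([A-Za-z][A-Za-z0-9_]*)")


def find_unresolved_tags(prompt: str, references: Dict[str, str]) -> List[str]:
    """Return tags used in the prompt that have no reference file, in order of first use."""
    unresolved: List[str] = []
    for tag in TAG_PATTERN.findall(prompt):
        if tag not in references and tag not in unresolved:
            unresolved.append(tag)
    return unresolved


prompt = "The woman from @image1 walks down the street in @video1, cut to the beat of @audio1."
references = {"image1": "hero.png", "video1": "street.mp4"}  # audio1 was never attached

print(find_unresolved_tags(prompt, references))  # prints ['audio1']
```

A check like this catches a missing reference before a generation is spent on a prompt the model cannot fully resolve.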

Here’s a comparative overview of Seedance 2.0’s key differentiators:

| Feature / Aspect | Seedance 2.0 | Other Leading AI Video Tools (e.g., Sora, Runway) |
| :--- | :--- | :--- |
| Audio-Video Generation | Native, simultaneous audio and video generation with basic lip-sync (Dual-Branch Diffusion Transformer). | Often generates video first, then dubs audio, leading to potential sync issues. |
| Structure Control | "Director-level controls" and "industry-leading structure control"; claimed to outperform Sora 2 and Veo 3 in this aspect. | Varies; often more prompt-based, less granular control over camera, motion, and consistency across shots. |
| Usable Output Rate | Reported 90% usable output rate (80% reduction in wasted compute). | Estimated industry average of ~20% usable output rate. |
| Consistency | Multi-shot consistency for characters, scene elements, and visual style. Physics-based realism. | Maintaining consistency across multiple shots can be a significant challenge. |
| Input Modalities | Multimodal: Text, images, video references, audio (up to 12 files). | Primarily text-to-video or image-to-video, with fewer deep multimodal integration options for creative direction. |
| Availability | Limited beta launched Feb 2026; integrated into Jimeng AI, Dreamina. Primarily available in China; "coming soon" to Artlist, fal.ai. | Varies; some (Runway, Pika) are widely available; others (Sora, Veo, Luma Genie, Kling) remain in private preview or limited access as of March 2026. |

Real-World Seedance 2.0 Use Cases for Modern Creative Studios

The advanced capabilities of this platform unlock a myriad of practical applications across diverse industries, making it an invaluable tool for modern creative studios. Its precision and efficiency are poised to redefine production workflows and deliver compelling visual content at scale.

In Advertising & E-commerce, this platform is highly touted for creating dynamic product videos, advertisements, and promotional content. Studios can leverage its realistic generation capabilities to produce detailed product clips, demonstrate item functionality, and craft engaging visuals that significantly enhance online presence. Industry data suggests that AI video can boost ROI by 82% over traditional methods and cut costs by 70-90% without compromising quality, making this model a game-changer for e-commerce brands seeking higher conversion rates.

For Film & Media Production, this platform enables the rapid production of short cinematic clips, music videos, and independent films. Its "director-level controls" are particularly appealing for professional studios aiming to craft intentional, polished, and consistent video content. Even outlandish action sequences, typically resource-intensive to produce, can be generated with impressive realism and control using Seedance 2.0, streamlining pre-visualization and concept development.

Social Media & Content Creation is another area where Seedance 2.0 shines. It is ideal for generating high-definition AI reels, shorts, and captivating content optimized for various social media feeds, stories, YouTube, and landing pages. The ability to quickly produce professional-quality, attention-grabbing videos allows content creators to maintain a consistent and high-volume publishing schedule, a crucial factor in today's digital landscape.

For sophisticated Visual Storytelling & Marketing, the model empowers creators to build coherent narrative beats—from hooks to actions and payoffs—through consistent multi-shot sequences. It transforms static text or images into professional-quality videos with cinematic aesthetics, enabling brands to tell their stories more vividly and effectively.

Furthermore, Seedance 2.0 can be effectively utilized for creating engaging and dynamic App & Website Backgrounds. These captivating visual elements can significantly enhance user experience and engagement on digital platforms.

Discover how leading agencies are integrating AI video into their workflows by exploring our directory of top-tier AI video production studios at StudioIndex.ai/studios.

The Seedance 2.0 Controversy: Copyright, Ethics, and Creator Concerns

Despite its technological prowess, Seedance 2.0 became embroiled in significant controversy within days of its February 10, 2026 launch, sparking an intense debate about copyright, intellectual property, and the ethical implications of AI training data. This discussion has brought critical questions to the forefront for creative industries and decision-makers alike.

The model faced swift and vocal condemnation from major industry players, including the Motion Picture Association (MPA), The Walt Disney Company, and Paramount Skydance. These entities collectively accused ByteDance of egregious copyright infringement, alleging that Seedance 2.0 was trained extensively on vast quantities of copyrighted works—including iconic franchises like Marvel, Star Wars, Star Trek, and South Park—without proper licensing, compensation, or permission from the rights holders. This culminated in Disney dispatching a formal cease-and-desist letter on February 13, 2026, demanding immediate action.

The controversy quickly ignited widespread Creator Anxiety across the globe. Screenwriter Rhett Reese, known for co-writing Deadpool & Wolverine, publicly reacted to the viral clips generated by Seedance 2.0 with a stark social media post: "I hate to say it. It's likely over for us." This sentiment reflects a deep-seated fear among traditional creatives about job displacement and the devaluing of human artistry in an era of increasingly sophisticated generative AI.

Adding to the industry's outcry, the powerful actors' union SAG-AFTRA also issued a strong condemnation. The union highlighted "blatant infringement" and "unauthorized use of our members' voices and likenesses," underscoring critical concerns over consent, fair compensation, and the exploitation of human talent by AI models.

In response to the escalating allegations and industry pressure, ByteDance issued a statement on February 16, 2026. The company pledged to strengthen safeguards against intellectual property violations, although specific measures and their implementation timelines are still pending clarification. The ongoing nature of this controversy underscores the urgent need for robust legal frameworks and ethical guidelines in the rapidly evolving landscape of AI video production.

The Future of AI Video Production with Seedance 2.0

The advent of Seedance 2.0 signifies a pivotal moment in the evolution of AI video production. Its comprehensive "director-level controls," innovative native audio-visual generation, and remarkable 90% usable output rate are not merely incremental improvements; they represent a fundamental shift towards more professional-grade, reliable, and creatively controllable AI tools. This technology is propelling the industry beyond rudimentary text-to-video generation, demanding significantly less post-processing and opening new avenues for efficiency and artistic expression.

However, the powerful capabilities of this model are inextricably linked to the ongoing copyright controversy. This intense debate underscores the critical and immediate need for the development of robust ethical frameworks and fair compensation models within the AI ecosystem. For StudioIndex.ai, it highlights the responsibility of creators and developers to prioritize transparency, consent, and equitable practices in the training and deployment of generative AI.

For studio owners, creative directors, and marketing leaders, strategic adoption of technologies like Seedance 2.0 requires careful consideration. Decision-makers must weigh the unparalleled creative capabilities and significant efficiency gains against the evolving ethical implications and the complex legal landscape. Navigating this new frontier effectively may involve forming partnerships with specialized AI studios that possess the expertise to leverage these tools responsibly and to mitigate potential legal risks.

The transformative potential of Seedance 2.0 is undeniable, promising a future where high-quality video content can be conceived and executed with unprecedented speed and precision. Yet, its journey also illuminates the critical need for a collaborative approach between technologists, artists, and policymakers to ensure that innovation serves creativity ethically and sustainably. Stay ahead in the rapidly evolving AI video landscape by connecting with innovative studios specializing in generative AI. Explore our comprehensive directory at StudioIndex.ai/studios.

FAQ Section

Q: What is Seedance 2.0?

A: Seedance 2.0 is a cutting-edge generative AI video model developed by ByteDance, the parent company of TikTok. Officially launched on February 10, 2026, this advanced platform allows users to create short, cinematic video clips, typically ranging from 4 to 15 seconds, with potential for longer narratives. It leverages a combination of text prompts, image references, existing video clips, and audio inputs. A key differentiator of Seedance 2.0 is its provision of "director-level controls," enabling users to have unprecedented command over elements like motion, lighting, framing, and character consistency within the generated content, marking a significant evolution in AI video production tools.

Q: When was Seedance 2.0 officially launched?

A: Seedance 2.0 was officially launched as a limited beta on February 10, 2026, initially on the Jimeng AI platform, a key component of ByteDance's AI ecosystem. A stable public release swiftly followed within the same month, demonstrating ByteDance's rapid deployment strategy. While its initial availability was primarily focused on the Chinese market, Seedance 2.0 quickly gained attention globally due to viral cinematic outputs. The model has since been integrated into various other ByteDance AI platforms, most notably Dreamina, a robust creative software suite, and is anticipated to be "coming soon" to international creative hubs such as Artlist and fal.ai. This expansion highlights ByteDance's ambition for Seedance 2.0 to become a global standard in AI video generation, despite the ongoing debates regarding its training data and ethical implications.

Q: How much does Seedance 2.0 cost to use?

A: The pricing model for Seedance 2.0 is currently somewhat ambiguous. While some early reports describe it as "free to use" or a "free Seedance 2.0 AI video generator," other information suggests a cost model for more extensive or high-volume usage. Estimates indicate a potential cost of approximately "$0.50 per generation." This pricing structure implies a possible freemium model, offering basic access for free while charging for advanced features, higher usage volumes, or access through specific integrated platforms. ByteDance also highlights an 80% reduction in wasted compute and a claimed cost efficiency over competitors' rates of "$1.00–$2.50" per generation.

Q: What makes Seedance 2.0 different from other AI video generators like Sora or Runway?

A: Seedance 2.0 differentiates itself from competitors like Sora and Runway primarily through its unique "Dual-Branch Diffusion Transformer" architecture. This enables native, simultaneous audio and video generation, including basic lip-sync, which means audio is created concurrently with the visuals, avoiding common synchronization issues found in models that dub audio post-video generation. Furthermore, Seedance 2.0 offers "director-level controls" for precise creative input and boasts a reported 90% usable output rate, a significant improvement over the estimated industry average of ~20%. It also emphasizes multi-shot consistency and physics-based realism for more cohesive narratives.

Q: What are the main controversies surrounding Seedance 2.0?

A: Seedance 2.0's launch in February 2026 was immediately met with significant controversy, primarily revolving around alleged copyright infringement. The Motion Picture Association (MPA), The Walt Disney Company, and Paramount Skydance accused ByteDance of training the model on copyrighted works, such as content from Marvel, Star Wars, and South Park, without obtaining proper permission or offering compensation. Disney sent a cease-and-desist letter on February 13, 2026. This ignited widespread debate among creators and unions like SAG-AFTRA about intellectual property rights, job displacement, and the ethical practices of AI model training. ByteDance responded on February 16, 2026, pledging to enhance safeguards against intellectual property violations.
